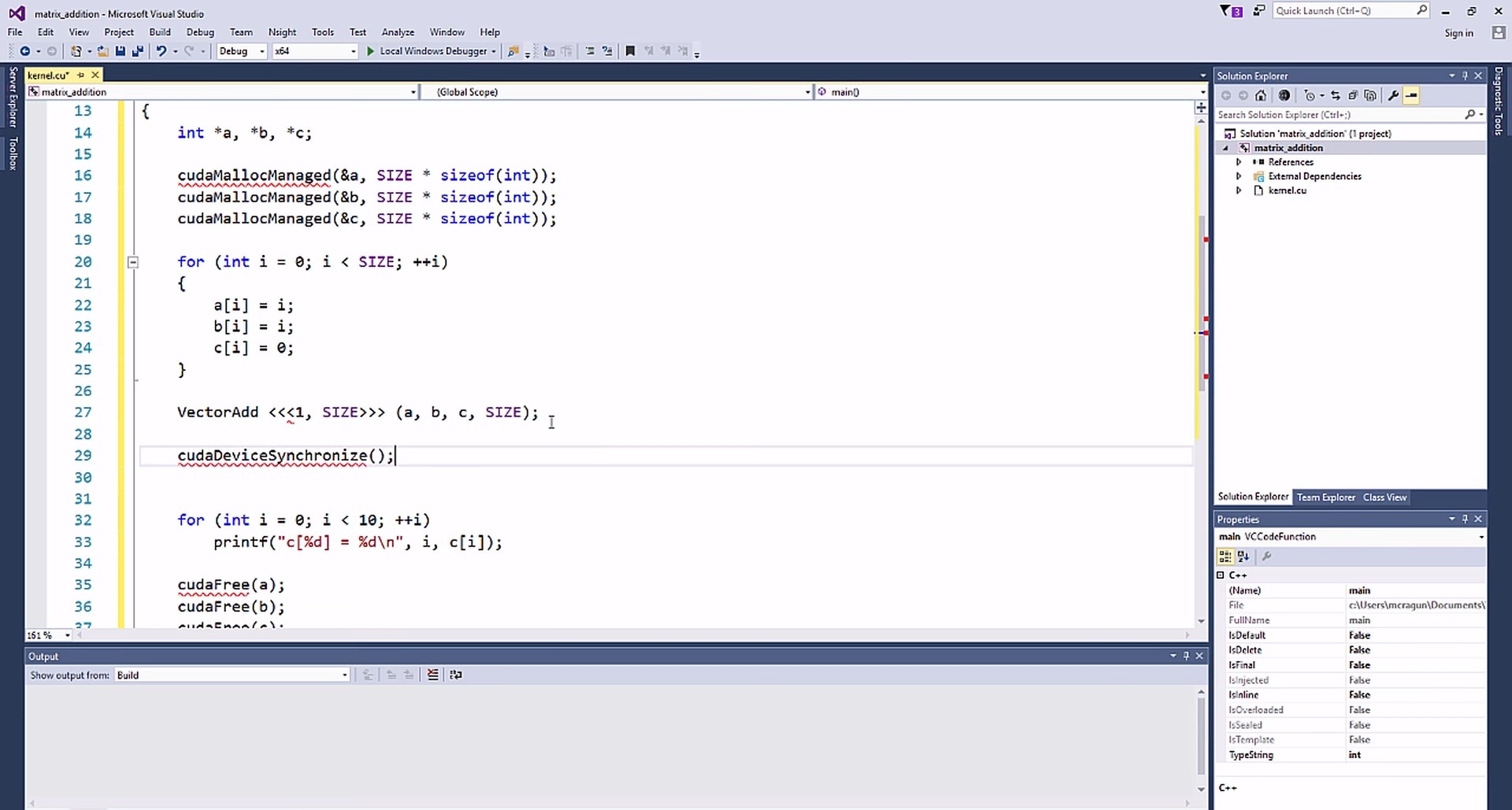

Most research computing in the CS department, and much of our instruction, uses GPUs with Nvidia’s CUDA software and applications such as PyTorch and TensorFlow. Most of our research and larger instructional systems (servers) have 8 CUDA-capable Nvidia GPUs. Desktop systems generally have one smaller GPU that is still CUDA-capable, so they can be used for courses in GPU programming or for preliminary software development.

GPUs can be shared by more than one user. However, GPU memory is limited (typically 12 GB on public systems), so in practice only one or two users can use a GPU at a time.

To keep one user from dominating our limited GPUs, we require that you run GPU jobs on iLab servers through the Slurm job scheduling software. No GPUs are available on iLab servers without Slurm. Slurm is not required on desktop machines.

The CUDA Toolkit is a set of APIs from Nvidia, designed to make it easy to write programs that use GPUs. Among other things, it provides a uniform interface that applies to many different models of GPU. Alternatives such as OpenCL exist, but CUDA is used most commonly here. CUDA has bindings for Python and most other major programming languages.

We install the latest version of CUDA on all systems with appropriate GPUs. Many users have existing code that requires older versions, and you can ask us to install previous versions on your system. Note that Ubuntu 20 supports CUDA 11, but no older versions. See the section on containers, below, for a way to use older versions of software.

In the CS department, GPU-based work is done primarily in Python. We would be happy to support users of other languages, but most tools currently installed are for Python. The most common tool is PyTorch, but we also have some usage of TensorFlow.

PyTorch, TensorFlow, and other tools are installed in Anaconda environments. Anaconda is a packaged environment for Python. DO NOT simply type “python.” On many systems that will give you an older version of Python, without access to the GPU-related tools. Instead, use the most recent version of Anaconda that you can.

The general-purpose Anaconda environments are located in /koko/system/anaconda/envs/. Currently we have python36 through python39. When possible, we can add additional ones on request. For most purposes, it is sufficient to add the appropriate environment to your path, e.g.

    export PATH=/koko/system/anaconda/envs/python38/bin:$PATH

For more on ways to run Python, see Using Python on CS Linux Machine.
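Before starting a GPU job, it can help to confirm that the `python` you are running really comes from one of the shared Anaconda environments and that PyTorch can see a GPU. The following is a minimal sketch, not an official tool: the helper names `describe_interpreter` and `cuda_status` are hypothetical, and the path prefix is simply the one given on this page. It assumes nothing about which packages a particular environment contains, so it reports a missing `torch` rather than failing.

```python
import importlib.util
import sys

# Location of the shared environments as stated on this page (assumption:
# your environment lives under this prefix; adjust if it does not).
ANACONDA_ENVS = "/koko/system/anaconda/envs/"


def describe_interpreter(executable=sys.executable):
    """Report which Python is running and whether it is a shared Anaconda env."""
    return {
        "executable": executable,
        "in_anaconda_env": executable.startswith(ANACONDA_ENVS),
        "version": "{}.{}".format(*sys.version_info[:2]),
    }


def cuda_status():
    """Return a short message describing whether PyTorch can see a GPU."""
    # find_spec returns None when the package is not importable here.
    if importlib.util.find_spec("torch") is None:
        return "torch is not installed in this interpreter"
    import torch

    if not torch.cuda.is_available():
        # On iLab servers this is expected outside of a Slurm job.
        return "torch is installed, but no GPU is visible"
    return "torch sees {} GPU(s)".format(torch.cuda.device_count())


if __name__ == "__main__":
    print(describe_interpreter())
    print(cuda_status())
```

If `in_anaconda_env` is false, the system `python` is probably being picked up instead of the Anaconda one, which usually means the environment's `bin` directory is not at the front of your `PATH`.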
